Creation requires intent

On reconnecting with the reasons we make things

I. Seven million years ago, in the dense forests of East Africa, a family of apes diverged.

One group drifted west, retreating deeper into the jungle. There they found empty niches, plenty of food. Stability. Their descendants became the chimpanzees.

The other group remained in the east. Over time, they saw their world gradually change. Thick woodlands, once abundant, were giving way to open savanna. These eastern apes, climbers by nature, were left with little choice. They descended. Their children stood to walk upright. A little less than four million years ago, upright apes were scattered across eastern Africa.

Then, on the shores of Lake Turkana, an ape picked up a stone with intention.

We must imagine what happened next. The stone was heavy, volcanic. From a distance, you would see the outline of a person, arms raised.

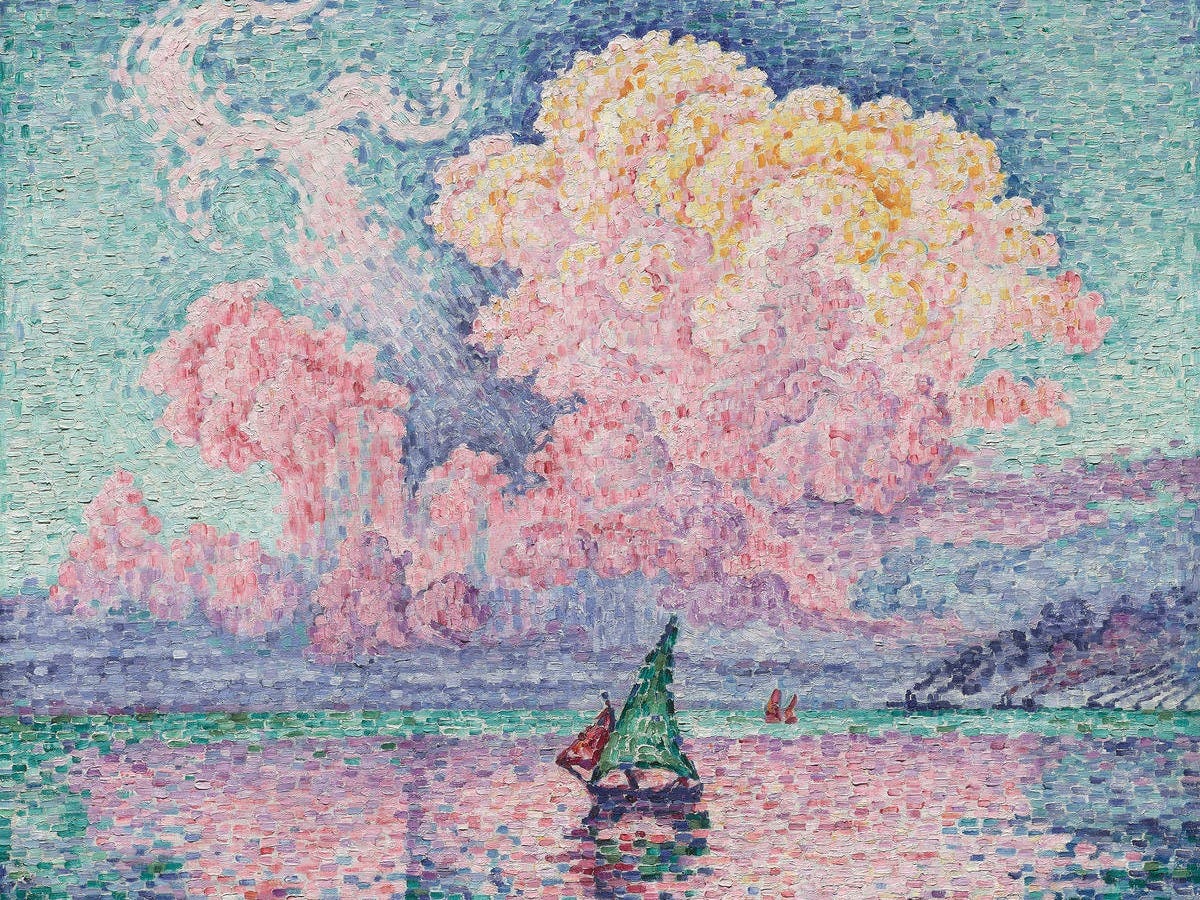

They bring the stone down hard; flakes of volcanic rock shear, scatter through the air. Sunlight shines through the thinning canopy, revealing the gleaming edge hidden inside. Behold the Lomekwian: our first tool.

With this new tool, the apes cut meat off scavenged bones, adding crucial protein to their diets. This helped fuel brain growth, which allowed them to adapt and produce more sophisticated tools. Their descendants were able to break animal bones apart, accessing rich bone marrow. Eventually, they transformed from scavengers to hunters, and created new physical and social tools to adapt to their rapidly-changing environment. The cycle spun for millions of years, and here we are.

The ape looked at a rock and imagined what it could become, then made it. This is an act without instinct, one that requires imagination: the first creative act on record.1

This is a story about the moment before the stone comes down.

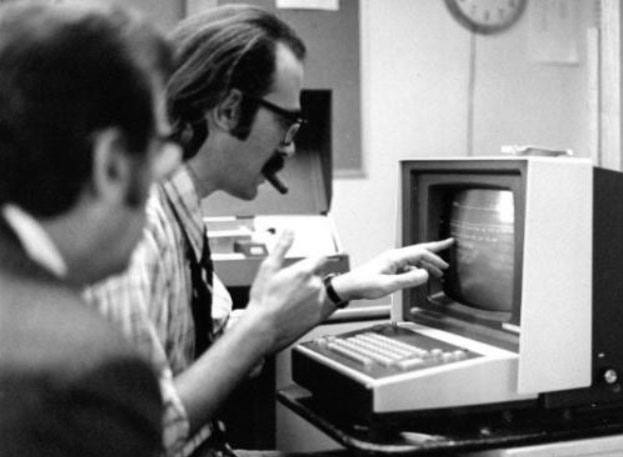

II. In 1968, Dr. Larry Weed published a paper in the New England Journal of Medicine titled “Medical Records That Guide and Teach.”

The title was his thesis: the medical record should not be a container for information, it should be an engine for knowledge. Data about the patient should organize around problems, the problems structure the physician’s thinking, the thinking generates better data.

Weed was an inventor. He created the problem-oriented medical record, the SOAP note, the problem list. He understood that the medical record was not just a system of record, it was a system of work. And he believed that if you got the workflow right, the record would guide the clinician.

In the 1970s he built PROMIS, one of the first electronic health record systems. It did what the paper described: organized knowledge around problems, connected clinical evidence to patient data, fed conclusions back into the record.

The idea was elegant, but the execution was held back by its time. PROMIS needed its own dedicated terminals and mainframe. This made it inaccessible to all but the largest academic institutions. But an electronic record in isolation is just an expensive filing cabinet. The value required a network.

In 2008, medicine still ran on paper. Fewer than one in ten American hospitals had an electronic health record system. When the economy collapsed, Congress added $35 billion to a stimulus package with the intention to drag healthcare into the digital age. The big idea: pay hospitals to adopt a (government-certified) electronic system, penalize those who refused. By 2015, 95% of hospitals had an EHR.

This is not the market inspiring a crowd of competing solutions. This is a square peg being crammed into a very abstract hole. Was the technology finally ready for the needs of clinical medicine?

A few years ago, I called a physician friend to help me prepare for an interview centered on “things I would change about healthcare.” We’ve known each other since fourth grade. As teenagers, we took Hindu philosophy classes on the weekends and often got into excited arguments. I could trust him to have a thoughtful, empathetic take. So I asked him, what would you change about healthcare?

He told me that his experience in medicine had given him a “moral injury.” His motivation to be a doctor was altruistic, but he was increasingly demoralized by the financial incentives that shaped his every day and limited the care he could provide for his patients.

I met with another friend, a pediatrician trained in Boston. We were sitting on the stairs, watching our kids switch between playing blocks and throwing them at one another, when she confided that she had made a mistake choosing medicine. She’d left her fellowship to start practicing in Roxbury. I also spoke to an emergency room physician who left medicine to teach, and a friend who dropped out of medical school for a job in tech.

In the interview, I said, “Doctors and nurses aren’t just experiencing burnout—how they frame the world is being completely rewritten.” I wanted the audience to understand: these are not people who starve for resilience. These are people who wanted to be doctors to heal and to think. They are the physicians that Weed wanted to help.

The federal incentive to adopt EHRs was an input mandate; the government paid for a tool instead of incentivizing ideal outcomes, like patient or population health. Weed’s architecture, a system for reasoning and iterative learning, became an assembly line.

Once in college, I was walking with a girl I was trying to impress in the East Village. She asked me if I had read any Foucault. “The pendulum guy?” I replied. Apparently not.

Years later, I thought of that moment, which had stuck with me (how could it not), when in a bookshop I came upon a copy of The Subject and Power. In it, I found a framework that helped me understand what went wrong. Foucault had described this kind of regulatory intervention decades prior: “To govern, in this sense, is to structure the possible field of action of others.”2

Foucault argued that modern power operates through the organization of what is possible in a system, not through force or coercion. Regulating the adoption of electronic systems was a show of power, not progress. Worse, by defining the process, regulators limited the field of action. Instead of imagining what we could have built, they assumed the tool was a proxy for progress.

In 2008, the dominant vendor saw an opportunity: it built a system of record so large that it became gravitational, with 250 million lives maintained by a company that competes with others trying to serve those lives. Workflow that crosses the boundary of a system of record requires its permission to do so. This limits competition: solutions that can’t talk to the EHR can’t threaten the EHR. Attempts at regulation fail.

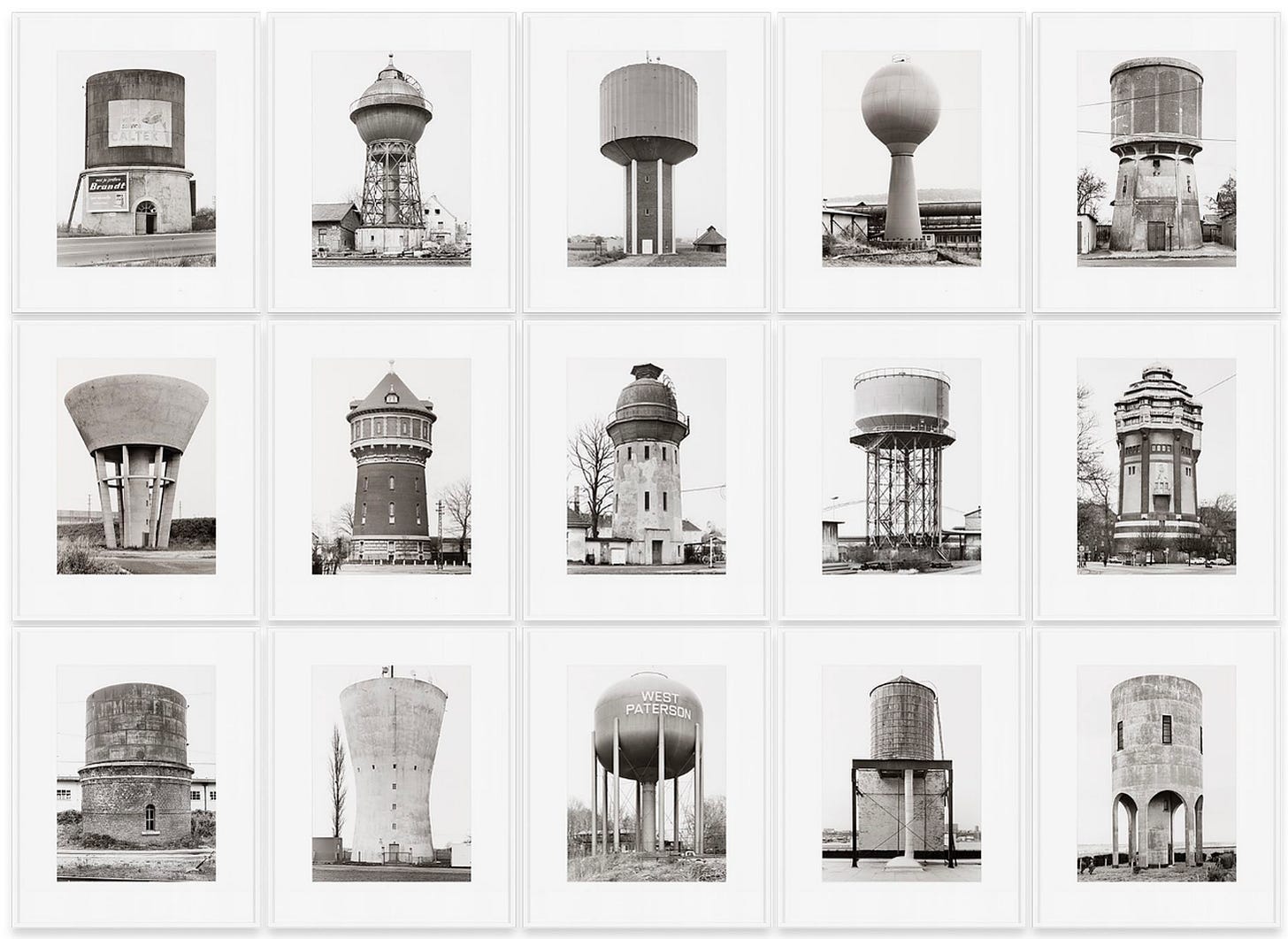

Into this captured market, venture invested billions of dollars. It’s hard to build workflow, so you build point solutions instead. On paper, it fits the model: start small, MVP, build a base, build out. In practice, every inch you claim is a bareknuckle fight. To date, there have been over a thousand venture-funded digital health companies. CIOs field dozens of vendor pitches a month. A tool for every pain point, plumbing upon plumbing.

When systems fail, everyday people become the connective tissue that makes it work. They must click and drag and drop and fax their way to what they need. Parts of the system are repurposed to assist them; the SOAP note became a billing instrument, the problem list became a checkbox.

The system then becomes a funhouse mirror of itself. A billing auditor tells a physician to add “pupils equally round and reactive to light” to a clinical note, not because it makes a lick of sense, but because it will allow for a higher billing code.

The people impacted most are our independent healthcare providers. A five-physician primary care group serving a rural community does not have a CIO, it has an office manager who also handles HR. They cannot negotiate API access or hire an integration engineer. Kaiser can absorb a thousand cloud applications; your neighborhood family doctor can manage three. People cover the gaps in the workflow. They’re routers, translators, administrators.

Physicians and nurses spend hours on menial tasks. They’re working on notes they couldn’t finish during the visit because they were wrestling with software. In the office, at home after the kids are asleep. Gaps in the workflow became an equity gap.

Into this mess enters the ambient scribe, promising something different.

First-generation scribes listen to physicians and draft their notes for them. But when you expand from there, something creative happens. Because documentation is integral to billing, the scribe can act as a lever for physicians to get paid, if it can get into the workflow. When the scribe feeds coding feeds billing feeds claims feeds revenue, you’re not just saving time, you’re helping practices thrive.

What makes scribes different from solutions of old? The better they work, the less attention they demand of their users. And the more agentic AI scribes become, capable of acting properly on behalf of care providers, the more unnecessary work they will remove. This is the workflow serving the clinician.

In 1982, Weed founded another clinical software company. It was eventually acquired by Sharecare, a consumer wellness platform co-founded by Dr. Oz. In 2011, when Weed was 87, he and his son published a book called Medicine in Denial. The lesson he’d learned over forty years was that “[m]isdiagnoses are not failures of individual physicians. They are failures of a non-system that imposes burdens too great for physicians to bear.” When asked whether he was optimistic, Weed said: “Based on what I know about all the vested interests in the present medical education system and in the present practice of medicine, I am not optimistic such changes will be forthcoming.” Weed died in 2017 at 93.

Recently, I visited my ENT, a British fella trained in America, for a surgical follow-up. He had a specialized camera in my sinus that let him find and remove debris in one maneuver. A TV in my sightline showed the camera’s feed in real-time.

While trying not to sneeze, I asked him how he liked his training. He liked it fine, he said. During fellowship, he’d had the chance to design and build his own medical device. The existing tools weren’t good enough.

“What device was that?” I asked. “It’s currently up your nose,” he smiled.

As he finished up, I asked him what his next project was. He’d recently bought a GPU server to train his own ambient scribe, one that met his quality bar. He had lots of ideas too, wanted to see what else he could plug it into. Weed would have recognized him immediately.

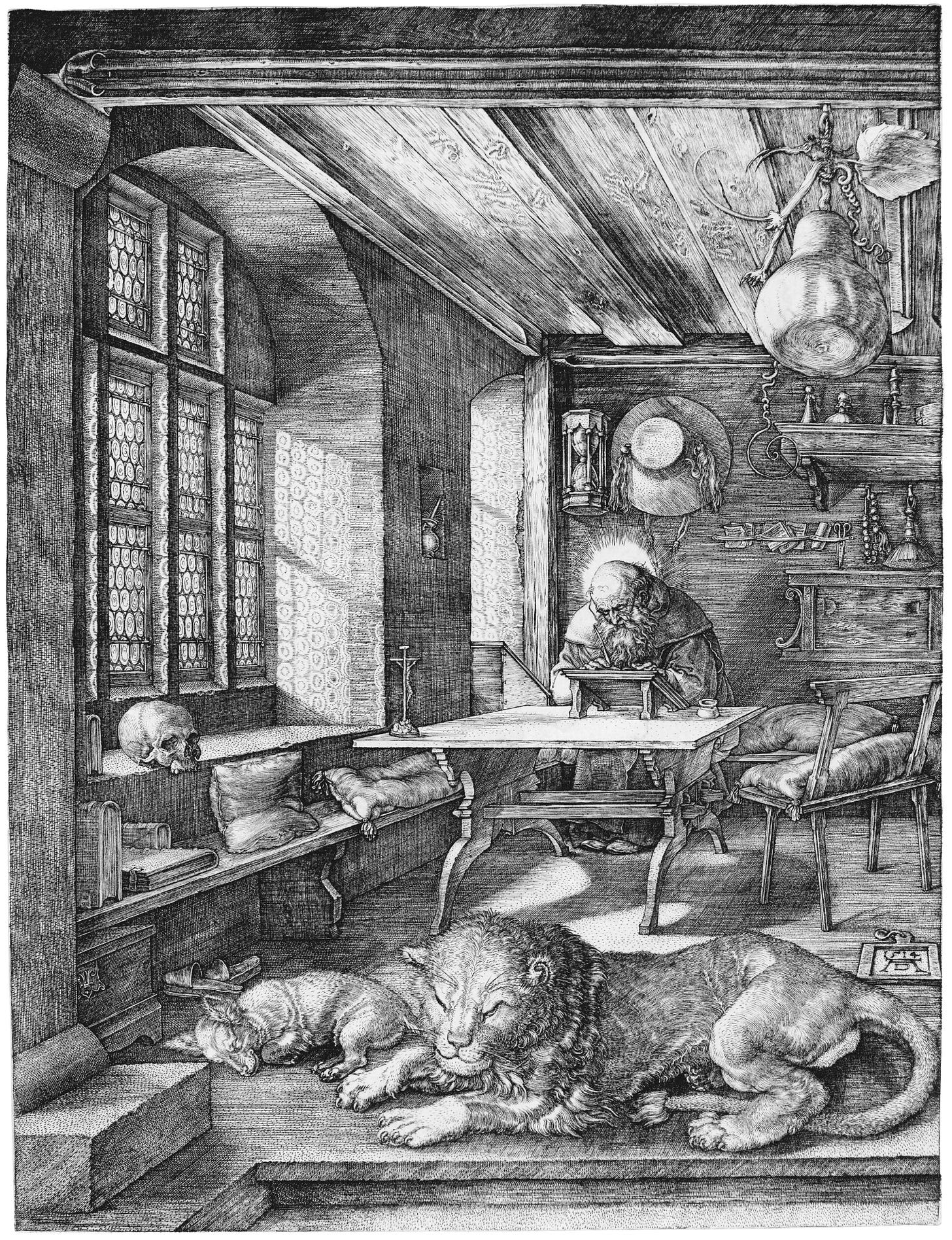

III. When you refuse to take a pill for something, you reach for a book.

My “something” was a deep sense that I had lost my purpose. I was stuck in a job I no longer enjoyed. The workaholic in me was angry; it could handle abuse if the mission was worth it, but it was increasingly, obviously, despairingly not.

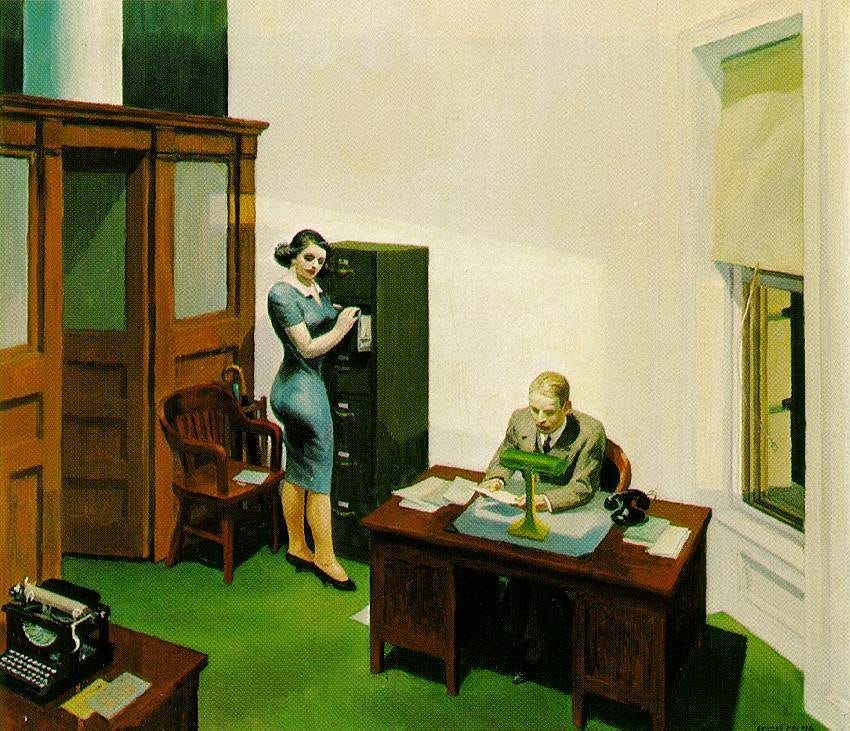

An analyst formatting a report until 2am for a meeting that will be rescheduled in the morning. A researcher preparing a deck for a presentation few will show up to. A product manager updating a kanban board that no one will look at.

I have been all these things.

I did these jobs because I saw a gap between what mattered and what was seen and rewarded. And the longer I tried to cover the gap, the harder it was to remember that I was hired to think.

Rather than allowing myself to churn, I locked into research mode and found the late social anthropologist David Graeber. In the 2010s, he interviewed people from different industries and developed a theory about the nature of modern work, and how far it has drifted from purpose. He glibly called the kind of jobs I was doing bullshit jobs, and said they “regularly induce feelings of hopelessness, depression, and self-loathing.”

Graeber concluded that this kind of work isn’t a bug, it’s a feature. The friction of producing results fills time, and the filled time protects everyone from introspection (we have another, less academic term for this: busywork).

Despite the tone, Graeber seemed empathetic and deeply serious about the consequences of doing a job that you believe has no meaning. He wrote that it amounted to a “spiritual violence directed at the essence of what it means to be a human being.”

How did we get to the point that there were enough people who said, yeah I work a job like this, to merit serious study? Graeber himself wonders aloud, how did we as a people get stuck?3

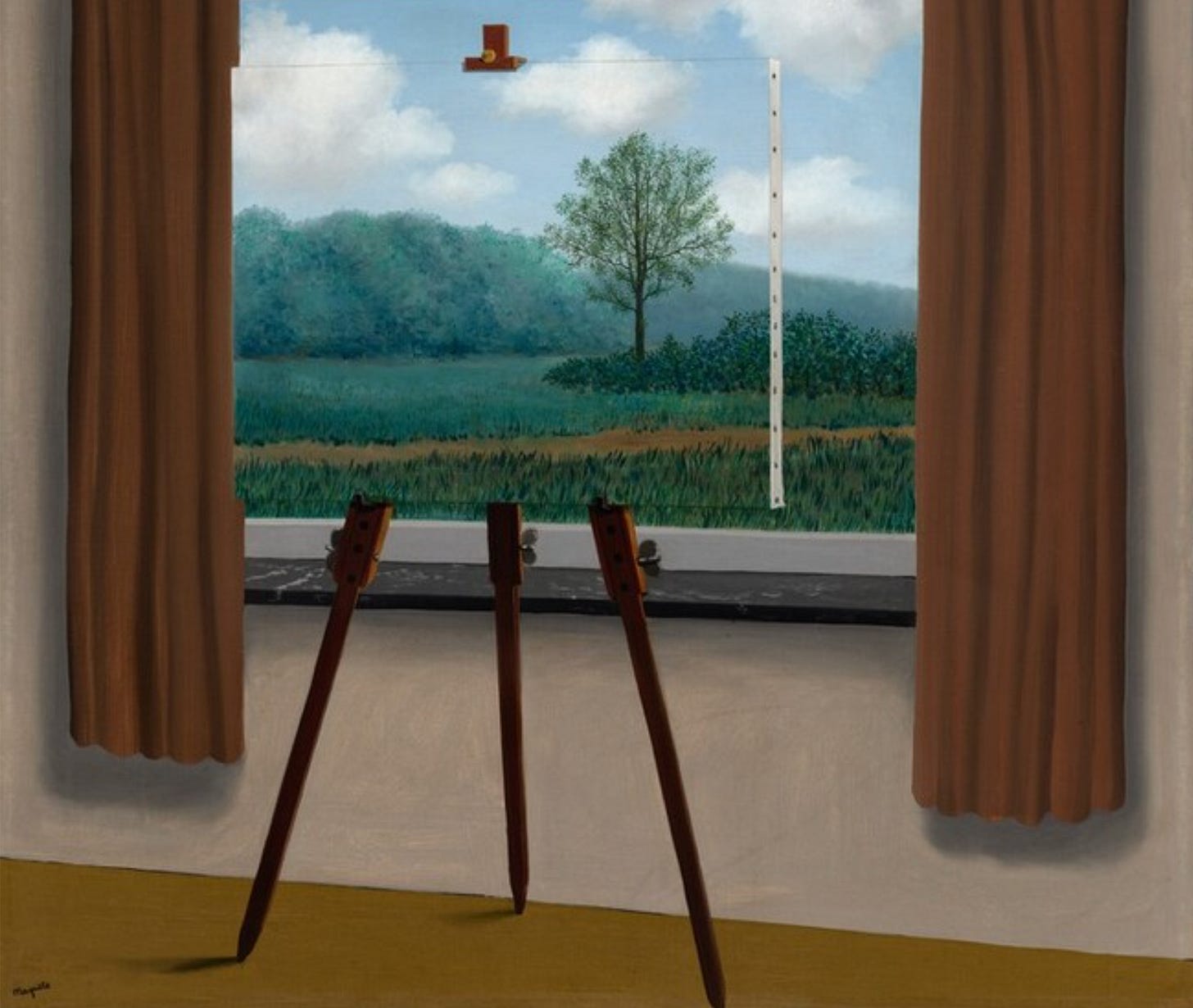

By chance, I also discovered Jean Baudrillard (ok I was scrolling Instagram4), and picked up a copy of Simulacra and Simulation. In it, Baudrillard wrote: “It is no longer a question of imitation, nor of reduplication, nor even of parody. It is rather a question of substituting signs of the real for the real itself.” — It being existence.

I felt what he described: meetings to plan meetings, spreadsheets that exist so the spreadsheet can be updated. Work that refers only to other work.

Now I had a working theory of why I felt purposeless; Foucault named the cause, Baudrillard named the effect, Graeber named the consequence. The system we inhabit forces us into a job that becomes a pantomime. It satisfies your observers, but you know you’re living a simulation. You become the apparatus substituting signs of the real. You take a moral injury from the spiritual violence.

At this point, I had a diagnosis, but I didn’t have a name for what I was missing, or a prescription for what to do about it.

IV. As a PhD student, I wanted to make learning faster.

I wanted to create better tools, algorithms, and analytical methods. This desire peaked in 2015, when I was enamored of the promise of machine learning. It was exactly the right moment for someone with basic Python and statistics know-how to become a data scientist.

Plus I needed a clean break from benchwork. I no longer enjoyed the methodical, backbreaking process of collecting data (I used to do microsurgery on fruit flies, buy me a drink before you ask me about it). But as a data scientist, I would have perfect posture and, with the right spreadsheet and methods, predict anything.

From there, I joined a genomics startup at peak sequencing fever. The prevailing hypothesis: if researchers understood genetic mutations better, we would understand disease better and cure it faster. Our startup was building sophisticated tools for the step between sequencing biological tissue and understanding what its genetic code meant. The plumbing we provided was essential, we believed.

Stubbornly, I learned that few customers cared about the plumbing, they cared about the insights. Meticulous product details that we labored over were disregarded or dismissed.

Around the time I left, Google released DeepVariant, a mutation detector5 that collapsed layers of complex, pedagogical algorithms into a single model. Obscenely, it effectively did what clinical testing companies were quietly doing, having rooms of people look at gene sequencing data to spot the mutations. It did this using computer vision instead of interns.

I realized I had to be more discerning with the problems I chose.

My next job was a diagnostics company, much closer to the action. My team provided data and insights on cancer mutations and patient outcomes to companies developing cancer therapies. One morning, I met with colleagues at the campus of one of our largest clients. They wanted to share what they were learning.

We were huddled excitedly around the walnut table. Their senior analyst pulled up a slide and proudly explained their discovery: the older someone was, the longer they lived on a cancer therapy.

I slowly looked around the room and met eyes with our biostatistician, whose eyebrows screamed: of course older people live longer, they’ve had time to get older! Whoopsie.

Later, back at the office, putzing around on Twitter, I saw a new paper on transfer learning. It was an elegant idea: train a model where you have lots of data, then fine-tune it in specific domains where you have only a little. Our team had been using this approach on patients with rare cancer types. We were able to more accurately predict which therapies they would respond to; a boon.

This paper had done something like it, but for language, and at a scale that changed the game entirely. They called their invention BERT.

I realized that a lot of the plumbing we’d been building to structure data, package it, sell it—it could completely go away. It was DeepVariant all over. These new language models could collapse the distance between information and understanding; incrementally at first, then exponentially. I couldn’t predict the specifics, but I saw the outcome.

V. As I write this, AI is collapsing the cost of building software by orders of magnitude.

The workflow that used to require a hundred engineers and three years can now be assembled in months. Perhaps faster.

How should we respond to this moment? There are temptations.

One is to focus on output. Do more, do it faster. But a better hammer does not tell you what to build. We can ship a new widget faster than ever before, but if it creates more burden, more interactions, more checkboxes, more clicks — we have not solved a problem, we have merely added noise. Now we are starting to see the agentic version of this temptation: scaled-up swarm systems burning through millions of tokens, executing at superhuman speed with an AI CPO at the helm. We know what Baudrillard would say; a fancier tool used thoughtlessly accelerates the production of simulacra (faster bullshit is still bullshit).

Another temptation is to optimize the structures we work in today. But dusting a room that a hurricane has blown through won’t get you far. Consider Weed: the EHR mandate defined progress as adoption and cemented a system that violated what he spent his life striving for. Now we risk building a future that inherits those same constraints. We can choose a smarter workflow, a cleaner design. But our sense of what is good is often constrained by the architecture we should be dismantling. Unless we dismantle it, progress will be incremental, not exponential. Foucault would say that this is the system structuring you when you should be restructuring the system.

Or we could remove people from the loop entirely. They dislike their jobs? Just take them away. What a failure of imagination; if I offered you a robot army and all you could imagine was them doing jobs someone already does, please give them back. This instinct also misreads the “spiritual violence.” It is not an imposition, it is an absence. A void where purpose once stood, around which we revolve. Liberating ourselves from our jobs without offering purpose would be its own violence. The harm in purposeless work is that it’s purposeless, not that it is work.

The final temptation is to simply be more of what we already are. It’s hard not to turn a side eye at those doubling down on taste because they see themselves as tastemakers. Hard not to wonder at the builders building new buildings for the sake of building. To suspect that the people who see a future without people need a hug, maybe a warm blanket. Our conclusions reflect us back to ourselves and we mistake them for revelation.6

And now my confession: I have indulged every temptation on this list.

After all this wandering, the question remains: how to find purpose?

When I am wrestling kids in the morning and absorbing someone else’s urgency at work and scrolling on my phone at night, it can be easy to ignore the question.

I have found words to describe my circumstances and (hopefully) avoid past missteps. Still I find myself yearning. The danger is that yearning can be co-opted, twisted, corrupted. It can be commercialized. In times of scarcity, it can be patronized.

But we are not in an age of scarcity. I do not have to lick the boot of a pope in order to buy my canvas. What will I do with that freedom?

VI. “It is always the same: once you are liberated, you are forced to ask who you are.”

Thousands of years before Baudrillard wrote that, and thousands of miles away, on a battlefield in Jyotisar, Krishna spoke to Arjun: “It is better to strive in one’s own dharma than to succeed in the dharma of another.” If I was to rediscover my purpose, my dharma, it had to be my own. Once again, I’m sitting on the carpeted floor of the old community center with my friends, seeking clarity in ancient wisdom.

I didn’t have the words, so I reached for a book. Then I reached for another. Pretty soon, I was reflexively reaching for something to read instead of my phone after the kids went to bed.

When I read lines like — “Sometimes what I wouldn’t give to have us sitting in a bar again at 9:00 a.m. telling lies to one another, far from God.”7 — I wanted to revisit them, to feel what I felt again: revived by the electric burn of a sentence, crafted with intention, by someone who gave a damn for the reader.

So I bought a notebook. In the cool quiet after the final page, I stare at the ceiling and think. Then I write. I turn my notes into reflections, ranging from the snarky (“Camus once wrote, One must imagine Sisyphus happy. What this book presupposes is … maybe he wasn’t?”) to pages on yearning, after being awed by Hua Hsu’s Stay True.

I read twenty-nine books that year, twenty-nine more than I’d read the year before. I picked up weights for the first time in a long while. A poem came to me in a quiet moment and I jotted it down. I grabbed a brush and painted watercolors with my kids. I went on walks, I smiled at babies and said god bless you to sneezing dogs.

At no point did I feel a light bulb turn on. It was a long walk. One foot, one page, after the other.

What I had come to was a me that I’d forgotten. The path back had been so haphazard, accidental. Just a spark that turned into a practice. Is this how it’s supposed to be?

When my daughter is bored or warding off bedtime, she’ll wrap herself around my arm and ask for a story about her favorite invention of mine, Princess Buttercup—a very purple princess with butterfly wings.

When she was younger, the stories were simple and decorous. Princess Buttercup wants to forage for strawberries, oh no there’s a puddle, oh wait she has wings. Princess Buttercup wants to go to her friend’s bubblegum tea party, oh no she forgot to buy a gift, oh wait she bakes cookies with her dad.

As my daughter grows, so do the stories. Now, Buttercup’s people are suffering from famine. A fire-breathing dragon is hoarding the goods they bring in from nearby kingdoms, causing the local economy to collapse. Buttercup climbs the dragon’s peak to try and reason with him. But he turns his flame on her and she barely escapes. Meanwhile, a peasant girl also braves the dragon’s lair and discovers that there are some crops it cannot eat; they could learn to grow them. It will mean a hard first winter, and changing long-standing traditions, and no cookies, but they can survive. She informs a despondent Buttercup, who must now pick herself up and lead her people.

My daughter sometimes carpools to ballet with her friend’s mom, who recently revealed to me that my daughter tells the girls stories. These stories aren’t coming from books she’s read or variations on mine; they’re wholly hers. Their favorite story is about Chomp-Chomp, a half-monster/half-dinosaur that eats kids’ shoes. It’s the highlight of their ride.

When the friction of production disappears, it can no longer protect us from the question of what to produce.

Tools change. Temptations are evergreen. But what is real is not the tool or its output or the system it inhabits; it is the capacity that precedes them. Imagination allows us to explore; exploration reveals potential. Intention focuses that vision and the creative act fulfills it. This is what persists. This is what adapts. Everything else is simulacra.

Refuse, then build and rebuild. In Foucault’s words: “Maybe the target nowadays is not to discover what we are but to refuse what we are. We have to imagine and to build up what we could be.”8

In the world that shaped us, creativity was not mere luxury, it was survival. The western apes who found comfortable niches stopped exploring and became chimpanzees. The eastern apes adapted to relentless ecological pressure through creation: new tools, new structures, new ways of thinking, new forms of culture.

Terms like “lizard brain” offer the false impression that our deepest motivations are fear and hunger. But the creative act is also ancient. The apes at Lomekwi were imagining and creating tools roughly two million years before an ape could control a flame. Three million years before they could form sentences. Creation is primal.9

I’m not sure the ape at Lomekwi had a plan, at least not in the beginning. But they had to imagine the edge. And, perhaps after some trial and an abundance of error, they brought the stone down with intention.

Today, if you were to wander into the forests of New York, you may spy a different set of apes: my children. Modern apes still bang stones. They invent words and songs too. They can’t climb very well, but they fill the canopy with their laughter and screams. They draw towering smoke and leaf monsters and paint watercolors of bugs with a hundred and three legs.

What will they create next? The first step is always the same: imagine something that isn’t.

1. “On record” being the operative phrase. You may have heard of the “carrier bag theory,” advanced by Elizabeth Fisher and the wonderful Ursula K. Le Guin, which suggests that the first tools were likely soft and organic (bags, slings, nets). In other words, made from materials that don’t survive in the archaeological record. If they’re right, the creative act is even more ancient.

2. From Foucault’s The Subject and Power (1982).

3. Graeber goes on to explore the trajectory of human creativity in The Dawn of Everything: A New History of Humanity (2021). It’s on my reading list for this year, I’m excited to see where we agree and disagree.

4. Shout out to @louisamunchtheory.

5. Bioinformatics friends: yes I know it’s a variant caller. “Mutation detector” is just more fun.

6. On February 22, 2026, Citrini Research published “The 2028 Global Intelligence Crisis,” a scenario imagining AI-driven mass unemployment and economic collapse by 2028. In part due to that, in part due to general wariness, markets sold off sharply the following Monday. The collective response was not to imagine alternatives, but to retreat. This is bias playing out at massive scale in real time.

7. From Jesus’ Son by Denis Johnson.

8. The Subject and Power (1982).

9. Lomekwian tools were discovered at the Lomekwi 3 site in West Turkana, Kenya in 2015 and date to ~3.3 million years ago. This makes them the oldest known stone tools. The earliest evidence of controlled fire use comes from Wonderwerk Cave, South Africa, ~1mya (though some contested claims push to 1.5mya). The emergence of syntactic language is debated but most estimates place it within the last 100k-200k years.

Thank you to Rohit Kaushik and Emily Kaushik for preventing me from publishing a much worse essay.

“Necessity is the mother of invention.” (An old saying)