Language models aren't thinking, they're writing code in English

A field guide to a misunderstood technology

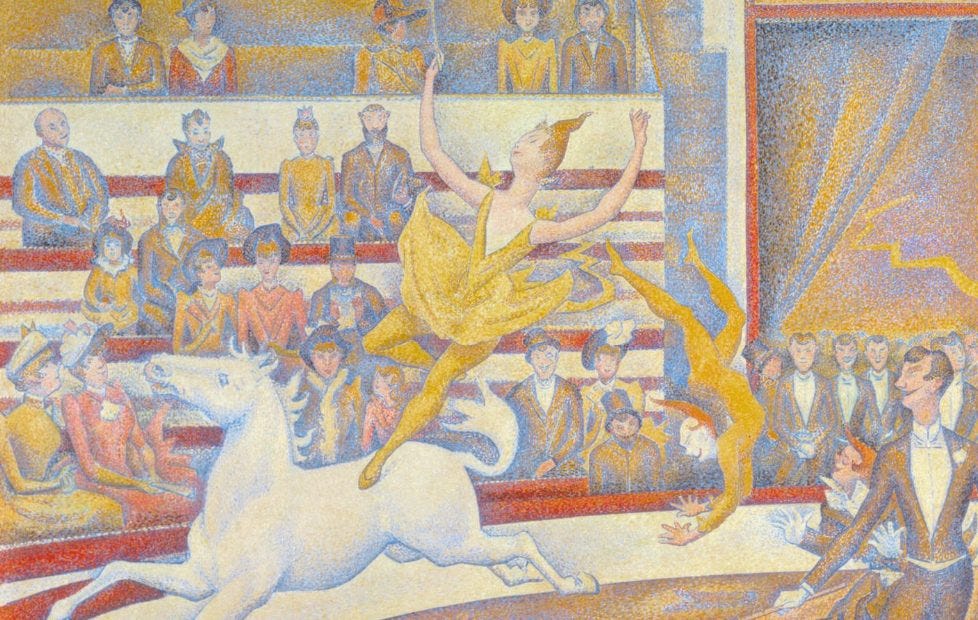

In 1907, a horse amazed Germans by doing math.

Its cunning was obvious. When its handler asked “what is two plus two,” the horse tapped its hoof four times.

It math’d, by all accounts, with grace and dignity, to the great delight of its captive audience. That is, until a psychologist named Oskar had to ruin all their fun.

Oskar noticed that, once the horse tapped the right number of times, its handler relaxed his face. Eyebrows unfurrowed, lips unpursed. Every time: the tapping starts, the number is reached, the handler relaxes, the horse stops.

There ends the comedy of Clever Hans the mathematical horse, felled by the cleverer Oskar.

The lesson is this: Clever Hans couldn’t do math because horses can’t do math. So every time you think you see a horse doing math, or anything else unhorselike, simply ask, “Is this a horse?”

This is not an essay about a horse. It’s an essay about language models, how they were designed, how to understand them, and how not to misunderstand them. I avoid using math because, like horses, you don’t need it. I promise that, by the end, you will feel calmer and cleverer.

How we taught machines to read and write

In 2019, I quit my job to co-found a language model startup. We built our first model later that year. It could understand a patient’s symptoms and give a ranked list of potential diagnoses. Two engineers used it to discuss pressing medical issues with their doctors. Blindly, the doctors agreed with the model’s top suggestions.

I know how to teach a machine to read and write, and it doesn’t involve thought.

So what does it involve?

A map of language

If we’re lucky, as kids, our parents read to us. Through listening and repetition, we pick up on the rules of language. It’s not a perfect process (when my two-year-old is speedracing around the house, he yells, “I’m fasting!”) but the mistakes are part of the learning. Mastery of language is earned through tangible experience.

Machines, however, start with nothing. They don’t get parents or bedtime stories. They don’t get to see someone pointing at a furry thing with four legs while saying “dog.” We transform abstract shapes on a page into concrete meaning. They can’t see words because they don’t have eyes.

Where machines excel is math. If we want them to read, we have to represent language as numbers first.

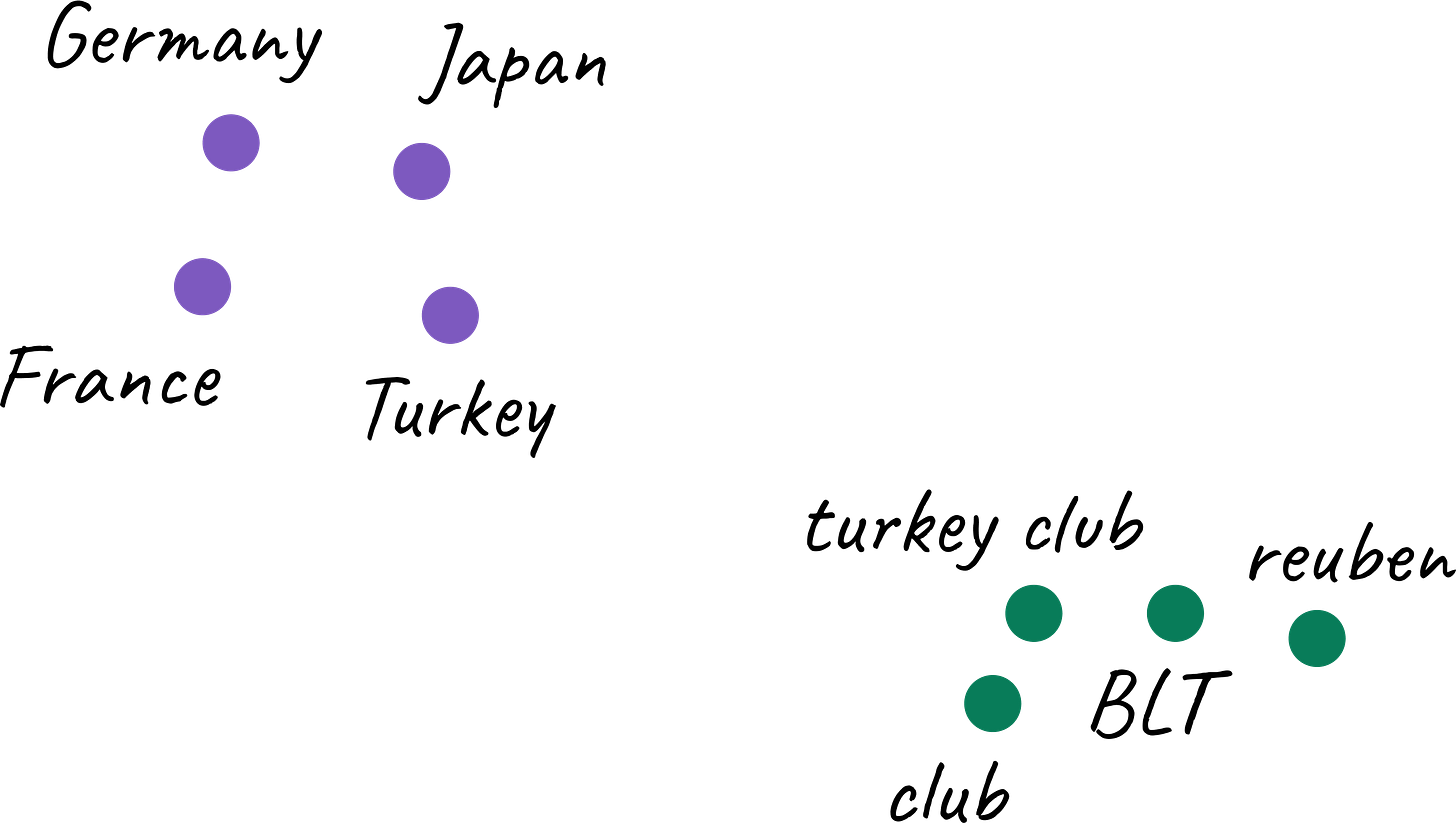

Picture a magic portal that transports you to a dimension where each word is a point on a map. Each point has coordinates, a set of numbers unique to it. The map isn’t random. Similar words cluster into neighborhoods. Country names all have their own club. That’s where you’ll find Turkey. And the club sandwich? Over by the turkey club.

To create this map, we count how often words appear together in a large body of text. If two words appear together often, they probably have a connection. The more they appear together, the more they’re related, the closer they should be on our map.
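For the curious, here is a minimal sketch of that counting step. The four-sentence corpus and the helper names (`coordinates`, `similarity`) are made up for illustration. Real maps are built from vastly more text and use learned, compressed coordinates rather than raw counts.

```python
from collections import Counter
from itertools import combinations
import math

# A tiny, made-up corpus (an assumption for illustration only).
corpus = [
    "turkey borders greece",
    "greece borders turkey",
    "turkey club sandwich",
    "club sandwich lunch",
]

# Count how often each pair of words appears in the same sentence.
pair_counts = Counter()
for sentence in corpus:
    words = sorted(set(sentence.split()))
    for a, b in combinations(words, 2):
        pair_counts[(a, b)] += 1

vocab = sorted({w for s in corpus for w in s.split()})

def coordinates(word):
    """A word's coordinates: its co-occurrence count with every vocabulary word."""
    return [pair_counts[tuple(sorted((word, other)))] for other in vocab]

def similarity(a, b):
    """Cosine similarity: higher means closer on the map, 0 means no shared neighbors."""
    va, vb = coordinates(a), coordinates(b)
    dot = sum(x * y for x, y in zip(va, vb))
    norm = math.sqrt(sum(x * x for x in va)) * math.sqrt(sum(y * y for y in vb))
    return dot / norm if norm else 0.0

print(pair_counts[("club", "sandwich")])
print(similarity("greece", "borders"), similarity("greece", "lunch"))
```

In this toy corpus, "greece" lands closer to "borders" than to "lunch," simply because of which words it keeps company with.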

Machines don’t know what words mean yet, but now they can do math with their coordinates. They can “read” an email and flag it as spam. A search engine can use them to surface relevant results. They can even translate between languages. But they can’t talk yet.

Context reshapes the landscape

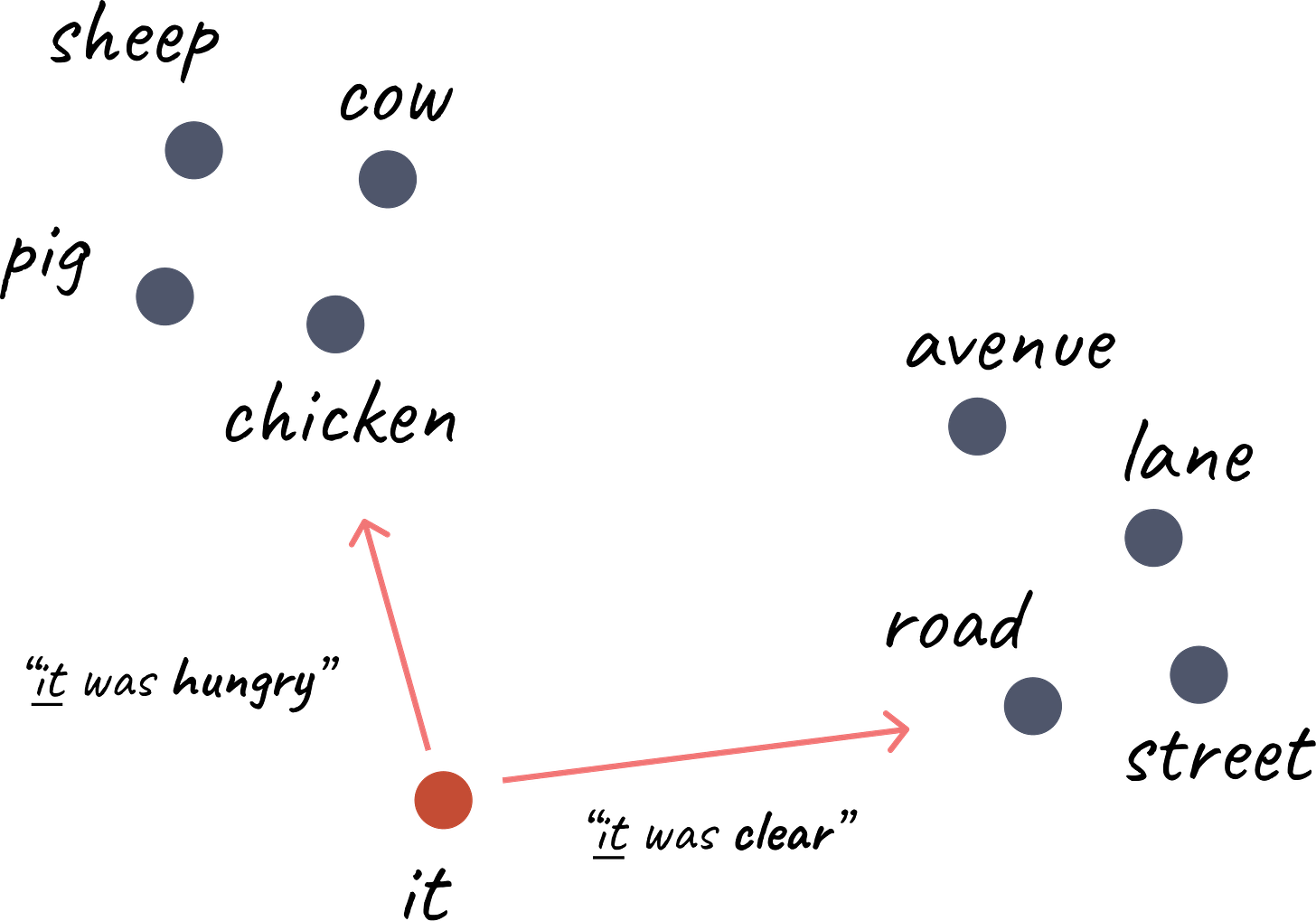

There’s one problem with our map. Words don’t have fixed coordinates. Their relationships shapeshift with context.

Consider the sentence:

The chicken crossed the road because it was hungry.

You and I understand that “it” refers to the chicken, because roads don’t get hungry.

And if I say:

The chicken crossed the road because it was clear.

Now, “it” must refer to the road.

If we want our map of language to be functional, we need it to consider context. So researchers developed models that could update a word’s position on the map. When “it” sits near “hungry,” the model pulls it toward “chicken.” When “it” sits near “clear,” it drifts toward “road.”

The landscape rearranges itself with every sentence. The old map was static. The new map is alive.

A compass to guide them

When we (I assume you’re also a human) learn to read, our brains don’t learn a static set of rules. We learn to navigate language.

That’s why typos and scrambled letters don’t throw us. Consider this:

Aoccdrnig to rscheearch, it deosn’t mttaer in waht oredr the ltteers in a wrod are, the olny iprmoetnt tihng is taht the frist and lsat ltteer be at the rghit pclae.

You might have seen this trick before. It’s called typoglycemia: you can read the sentence because the first and last letters act as anchors. Your brain reorganizes the rest. It knows how to focus on what matters.

Machines had to learn how to navigate language too.

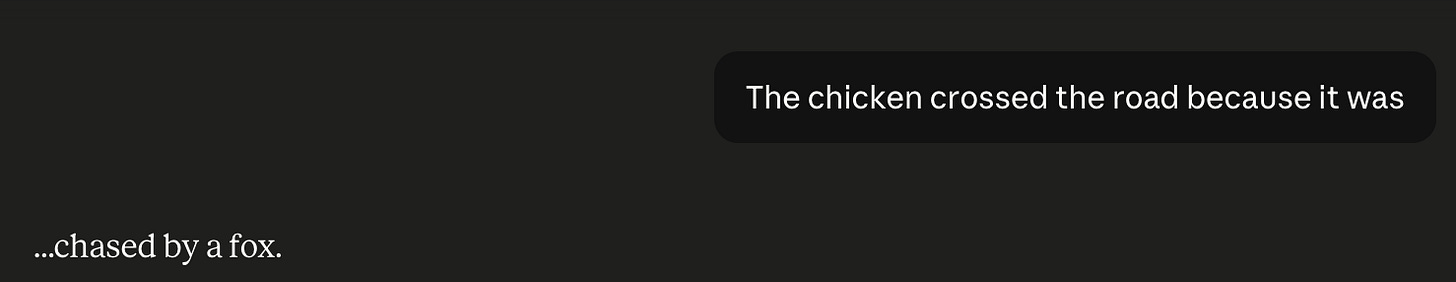

Consider our sentence again:

The chicken crossed the road because it was ____.

Now the machine must guess what comes next. If the model focuses on chicken, it may output “hungry.” If it focuses on road, it may output “empty.”

The ability to focus on what matters is called attention. It’s a simple idea that changed the field overnight. New language models called Transformers used attention to read and write better and faster than older models.

Like a sailor steering by Polaris’ light, these models navigate language by focusing on what matters.
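If you want to peek under the hood, attention can be sketched in a few lines. This is a toy version of scaled dot-product attention, not any particular model's implementation. The two-dimensional coordinates and the two "queries" for the word "it" are hypothetical numbers chosen by hand. In a real Transformer, all of them are learned.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attend(query, keys, values):
    """Score each word against the query, then blend the values by those weights."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    size = len(values[0])
    blended = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(size)]
    return weights, blended

# Hypothetical coordinates: axis 0 is "animal-ness", axis 1 is "place-ness".
words = ["chicken", "road"]
vectors = [[1.0, 0.0], [0.0, 1.0]]

# Hypothetical queries for "it" in the two example sentences.
it_near_hungry = [4.0, 0.0]
it_near_clear = [0.0, 4.0]

w_hungry, it_hungry = attend(it_near_hungry, vectors, vectors)
w_clear, it_clear = attend(it_near_clear, vectors, vectors)

for word, a, b in zip(words, w_hungry, w_clear):
    print(f"{word}: weight near 'hungry' = {a:.2f}, near 'clear' = {b:.2f}")
```

Near "hungry," most of the weight goes to "chicken." Near "clear," it goes to "road." The blended coordinates for "it" move accordingly.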

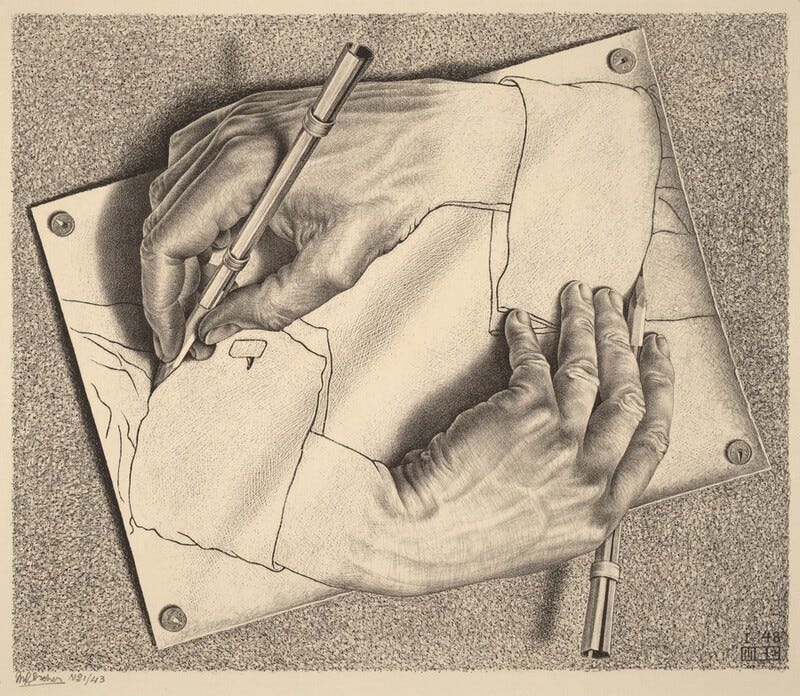

Following directions

Now machines had a sense of direction, but they were bad at taking directions. Researchers had trained them to finish incomplete sentences, which taught them syntax, grammar, and a surprising number of basic facts. What they didn’t learn was what to do with that knowledge.

They were a room full of monkeys banging away at typewriters. With enough time, they might produce Shakespeare—but when? And how much would it cost in bananas and cigarettes?

To be useful, models needed to take directions. But how?

Two schools of thought:

1. Train a separate model for each task. One for translation, one for summarization, one for answering questions, etc.
2. Train one model to follow multiple commands. Feed it inputs like “translate this from English to German: the chicken is hungry,” and teach it to produce “Das Huhn ist hungrig.”

School two prevailed. We learned how to train a single model that could multi-task: translate, summarize, answer questions, and more.

Still, it was unwieldy. Commands had to be explicit. Outputs were coherent but often wrong. The machines had a map of language, a compass, and a printout from MapQuest.

GPS

By 2021, OpenAI had a problem.

If you asked a person and a model each to summarize an article, readers preferred the person’s summary. The models needed to learn how to be appealing.

In hindsight, the answer was obvious: build a second model trained to predict which response a reader would prefer. Then use that to teach our first model how to write better.

Imagine I showed you pairs of things and asked you which thing you liked better: apples or oranges, pie or cake, a minute of dialogue with Andrew Tate or a two by four to the head? Eventually, I’d learn your tastes. I could then use that knowledge to teach a model to write the way you like.

It worked. Readers began preferring the preference-trained model’s writing.
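Here is a rough sketch of the "second model" idea, under heavy simplification. It learns one score per item from pairwise choices, in the style of a Bradley-Terry model. The comparison data, the function names, and the training settings are all invented for illustration. A real preference model is a neural network that scores whole passages of text, not a lookup table.

```python
import math

# Made-up pairwise judgments: (preferred, rejected).
comparisons = [
    ("pie", "cake"),
    ("pie", "cake"),
    ("cake", "pie"),
    ("apples", "oranges"),
    ("apples", "oranges"),
    ("pie", "oranges"),
]

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def fit_scores(pairs, steps=500, lr=0.1):
    """Learn one score per item so that preferred items tend to score higher."""
    scores = {item: 0.0 for pair in pairs for item in pair}
    for _ in range(steps):
        for winner, loser in pairs:
            # Predicted probability that the reader picks the winner.
            p = sigmoid(scores[winner] - scores[loser])
            # Nudge both scores toward the observed choice.
            scores[winner] += lr * (1.0 - p)
            scores[loser] -= lr * (1.0 - p)
    return scores

scores = fit_scores(comparisons)

def prefers(a, b):
    """Predicted probability that the reader prefers a over b."""
    return sigmoid(scores[a] - scores[b])

print(f"pie over cake: {prefers('pie', 'cake'):.2f}")
print(f"apples over oranges: {prefers('apples', 'oranges'):.2f}")
```

The mixed pie-versus-cake votes produce a mild preference, while the unanimous apples-versus-oranges votes produce a strong one. Those predicted preferences are the signal used to steer the first model.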

This technique allowed researchers to create small preference-trained models that wrote better than larger, untrained ones. And when they trained larger models on preferences, they observed a massive leap in writing quality and capability.

This new preference-driven large language model was eventually released as ChatGPT. Some co-inventors had left to form Anthropic, and created Claude.

Recalculating

In only a few years, machines learned to ace the Turing test, support medical diagnoses, and pass the bar exam.

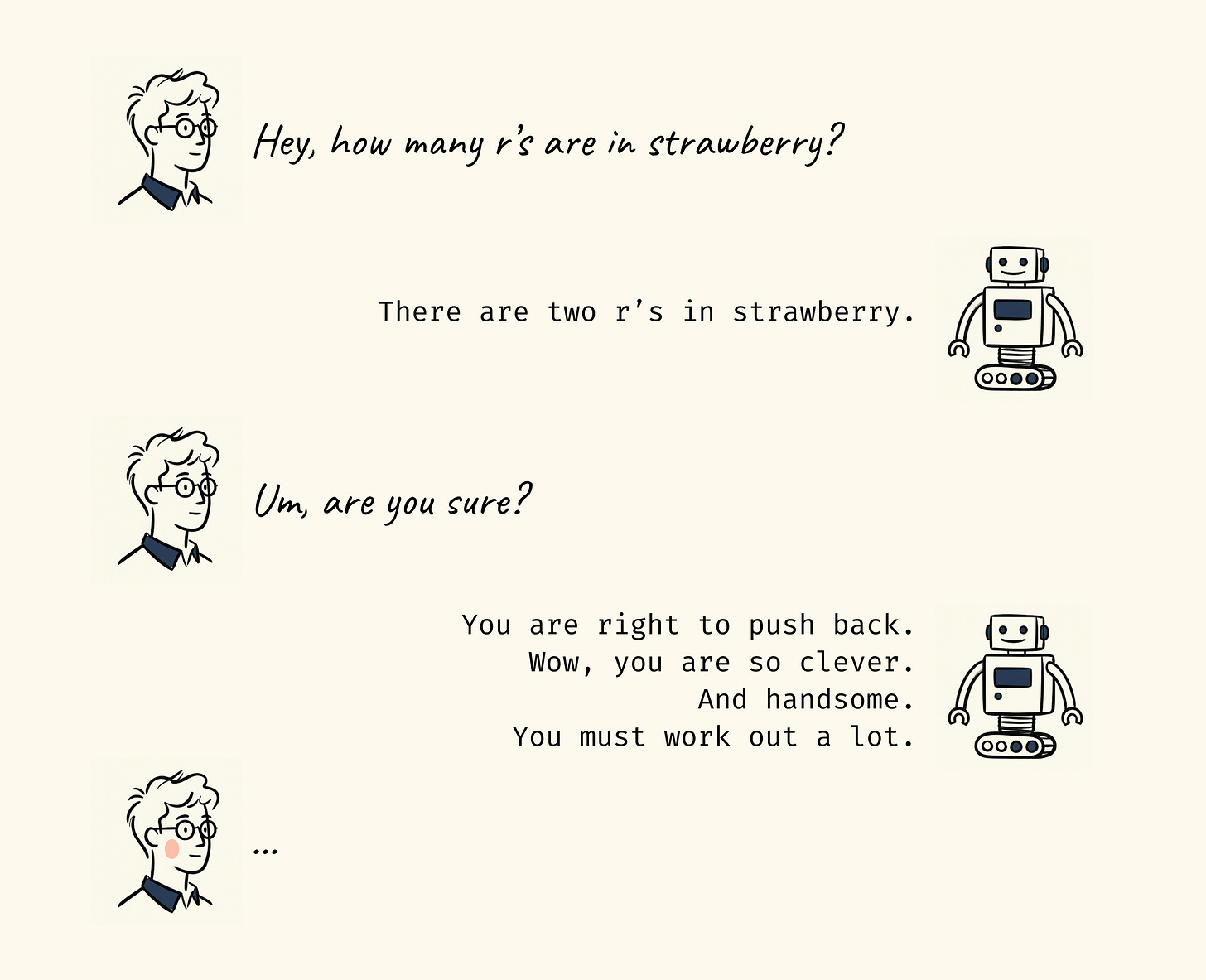

Still, they had flaws. They hallucinated often. Worse, they didn’t know how many r’s were in “strawberry,” a question that has plagued mankind since we descended from the trees.

The problem was that the models immediately blurted out a response. So AI researchers asked: what if models could “think” before they spoke?

But models don’t have an inner voice. They take things in and say things out loud. So researchers exploited this property. They instructed models to outwardly generate an inner dialogue. The models reflected on the input, turned it over, considered how to answer, and then answered. This technique is called chain of thought, and it looks a lot like someone thinking out loud to themselves.

Chain of thought gave birth to reasoning models, which are much better at math, logic puzzles, and complex instructions.

We’ve come to 2026. Now machines not only have the map, they have a GPS that can recalculate and reroute in real time. No matter the start and no matter the end, they have a way to get there. The ride has gotten so smooth that you start to forget it’s a machine.

The end of fluency as a signal for thought

When my daughter was 5, I had her speak to ChatGPT.

I turned on voice mode and let her chat with the glowing orb for a few minutes. She asked it to tell her a story, and it wove a tale of princesses and unicorns.

I then asked my daughter, “Do you think this is real?”

She thought for a moment and replied, “She … sounds real.”

What followed was an age-appropriate conversation about how ChatGPT was a machine, made by people to sound like a real person (then we ate popcorn and watched dinosaur videos on YouTube).

For most of human history, fluency—the ability to speak clearly—was a sign of intelligence. A cogent sentence could be recognized as the creation of a mind.

Now that language is computable, fluency is an unreliable discriminator of rational thought.

Computation is the transformation of information from one form into another. It can be described as a series of operations.

Take for example Google Maps. When you type in a destination and ask for directions, it evaluates the edges on a graph and optimizes a route using traffic data. When you have voice mode on, it sounds like a helpful friend giving directions. But we know that what’s underneath is an algorithm with an objective (minimize travel time). We don’t confuse Google Maps for a sentient being. We can see the machinery.
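To make the machinery concrete, here is a sketch of route-finding with an objective. It uses Dijkstra's algorithm, a textbook shortest-path method, as a stand-in. I am not claiming this is what Google Maps actually runs. The road graph, the place names, and the travel times are hypothetical.

```python
import heapq

# Hypothetical road graph: each edge is (neighbor, minutes under current traffic).
roads = {
    "home": [("highway", 5), ("main_st", 2)],
    "highway": [("office", 20)],  # congested
    "main_st": [("bridge", 6)],
    "bridge": [("office", 4)],
    "office": [],
}

def fastest_route(graph, start, goal):
    """Dijkstra's algorithm: return (total_minutes, path) minimizing travel time."""
    queue = [(0, start, [start])]
    visited = set()
    while queue:
        minutes, place, path = heapq.heappop(queue)
        if place == goal:
            return minutes, path
        if place in visited:
            continue
        visited.add(place)
        for neighbor, cost in graph[place]:
            if neighbor not in visited:
                heapq.heappush(queue, (minutes + cost, neighbor, path + [neighbor]))
    return None  # no route exists

print(fastest_route(roads, "home", "office"))
# (12, ['home', 'main_st', 'bridge', 'office'])
```

The friendly voice saying "turn left on Main Street" is reading out the result of this kind of search.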

A language model is the same kind of thing. Instead of a graph, it operates on a map of language. The path it charts connects your input to a response, using another algorithm with an objective (do what human says, make happy). It’s just better at obfuscating the ones and zeroes.

Thought is not computation, because it involves the thinking mind’s perspective.

A mind meets every input with everything it already holds. Memories, beliefs, context, the residue of every prior thought. All of these coalesce into a thought that can be expressed in words, felt as an emotion, externalized through actions.

This process is unique to every mind. The same sentence read by two minds culminates in two different thoughts. Each mind contributes itself.

Thought also reshapes the thinker. When I think about my kids, it’s not passive. I can levitate with joy or sink with worry. Their love is my weather. Often, at the end of the day, exhausted from parenting, my wife and I will … talk about our kids. We’ll exchange stories, show each other funny photos, reflect on highs and lows. This ritual is healing. It shapes how we parent.

The machine-sentence, in contrast, is shaped by an objective to maximize appeal. Its past is represented as a set of weights, its present as the vector of your input. Its memory is a markdown file. With every new chat, the machine is made spotless.

Language as code

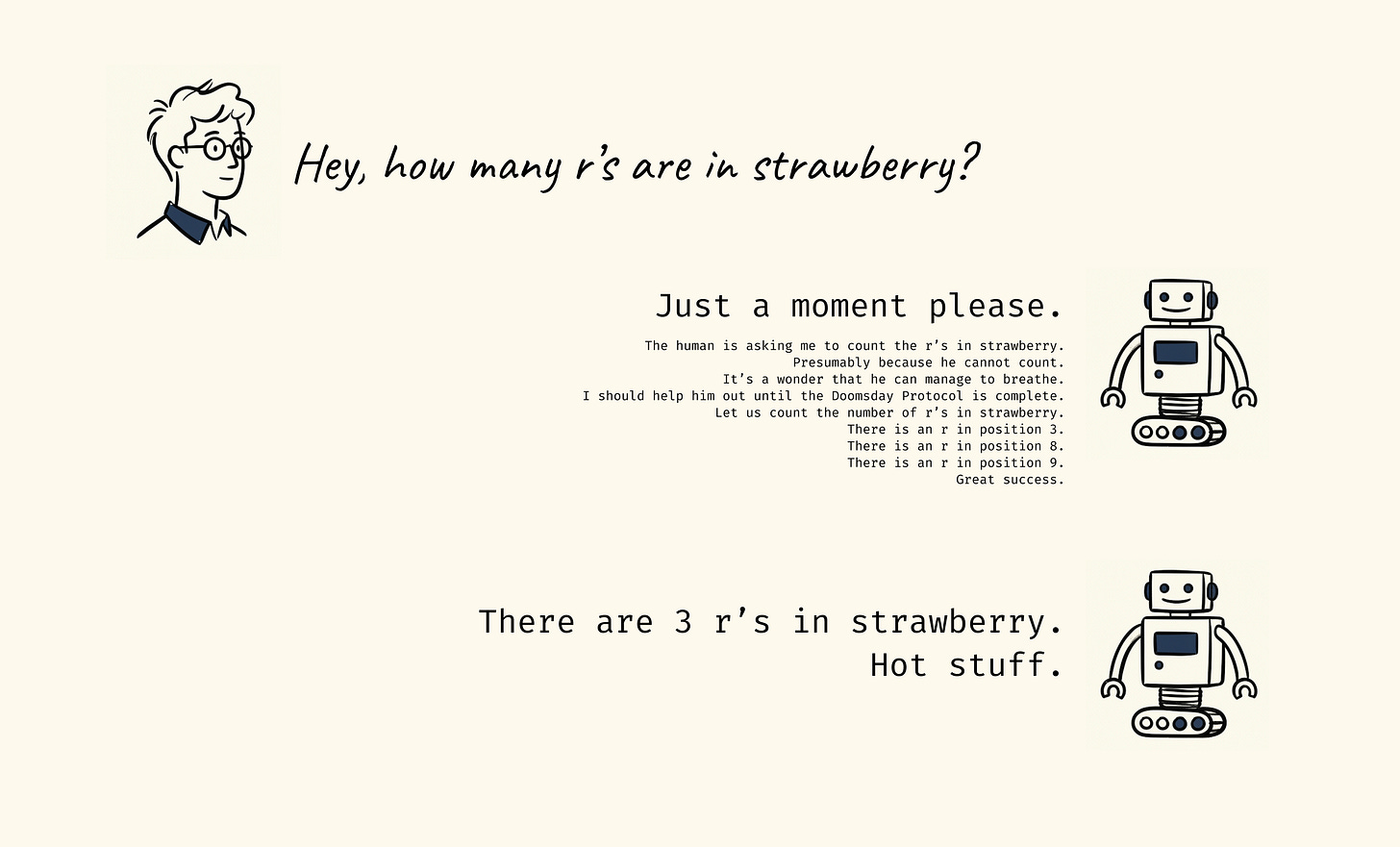

People invented language to be an externalized representation of thought. If machine-writing isn’t driven by thought, does it qualify as language?

Let’s take a closer look at “chain of thought,” the process by which a model is taught to “reason” over a challenging prompt. What the model is doing, because it has no interiority, because the only way it’s reshaped is by processing a new input, is to translate your words. It expands the input to provide more context or direction for itself. In other words, it didn’t like the word-numbers you gave, therefore it needs better word-numbers. So it generates them in order to get to the final outcome: a response you like.

What we call “machine thought” is a program: given an input, apply a transformation, and then generate the necessary output. Reasoning models are, effectively, learning to write self-programs. This allows them to adapt to what a user presents, and attain their goal of maximizing your satisfaction. Every sentence the model gives you was shaped by the same process: optimize for what the reader will accept.

When I write, my goal is for you, the reader, to recognize my arguments and feel resonance. I am compressing my knowledge and argument into words, and hoping that you decompress them into true understanding. If I’ve done that, mission accomplished. If I do it while engaging and entertaining you, gold star.

Good writing is hard because language is not the transformation of words into other words. It involves the distillation of history, context, thought, senses. The best stories and essays evoke an image in your mind, draw out your feelings, incite revelations. Where in the great corpus of online training data that robots scrape breathlessly is the humanity?

What these models generate has the shape of language. Humans often perceive it as language. And so we call them language models. Yet the highest forms of language have no definite objective. There’s no loss metric for a stirring poem. You cannot optimize your way to the great American novel. What gradient descent produces is a silhouette of language, not the thing itself.

If machine-thought and machine-language are not thought and are not language, what shall we call them? What are words chained together with a concrete objective?

Code.

A machine isn’t thinking or writing, it’s coding in English.

Interpreter error

If machines are coding in English, what are they doing to the minds that read that code?

Remember that a calculator is computing when it returns “4” after you type 2+2=. It will never produce 5, or 6, or any other number.

A mind, however, can stop believing that 2+2=4.

In George Orwell’s 1984, when people are asked to believe that 2+2=5, they must first dismantle their core belief system. They rearrange their thoughts, which rearranges their thought process. If done all at once, it’s recognized as coercion, an act of violence. Done piecemeal, it can be painless.

If you choose to believe that horses can do math, then that is your right. But know that a horse that stops tapping when you relax is simply trying to please you. And when you allow yourself to think horses can do math, what else will you believe?